The Indian government has proposed changes to its rules for regulating AI. The proposed rules mandate labelling all types of AI-generated content, including photos and videos.

The Indian government has taken its first step towards regulating AI and curbing its misuse. The Ministry of Electronics and Information Technology (MeitY) has proposed new rules that require social media platforms to ensure their users label all AI-generated or AI-altered content, including photos and videos. The proposal places the labelling burden on social media firms, although these companies can flag accounts that do not comply. Once the rules are implemented, all AI-created and AI-modified content will have to carry a label that clearly states the use of AI.

Conditions for the Label

Under the new rules, social media companies will have to display a clear AI watermark that is easily visible on AI content. The watermark must cover at least 10% of the content's size or duration. For instance, a 10-minute AI-generated video must show the AI watermark for one minute. Companies may face action if they are negligent in this matter.
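The duration arithmetic in the example above can be sketched in a few lines of Python. This is only an illustration of the 10% figure reported here, not the wording of the rules: the function names `min_label_seconds` and `meets_threshold` are hypothetical, and the sketch assumes that a label shown for exactly 10% of the running time is sufficient, as the one-minute example suggests.

```python
# Illustrative only: threshold taken from the 10% figure reported in the article.
LABEL_PERCENT = 10


def min_label_seconds(total_seconds: float, percent: int = LABEL_PERCENT) -> float:
    """Return the shortest label duration (in seconds) that reaches the threshold."""
    if total_seconds < 0:
        raise ValueError("total_seconds must be non-negative")
    return total_seconds * percent / 100


def meets_threshold(label_seconds: float, total_seconds: float,
                    percent: int = LABEL_PERCENT) -> bool:
    """Check whether a label shown for label_seconds covers the required share.

    Assumption: exactly 10% counts as compliant.
    """
    return label_seconds >= min_label_seconds(total_seconds, percent)


if __name__ == "__main__":
    ten_minutes = 10 * 60
    print(min_label_seconds(ten_minutes))    # required label time in seconds
    print(meets_threshold(60, ten_minutes))  # label shown for one minute
```

For a 10-minute video, the required label time works out to 60 seconds, which matches the one-minute example.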

Suggestions Can Be Submitted Until November 6

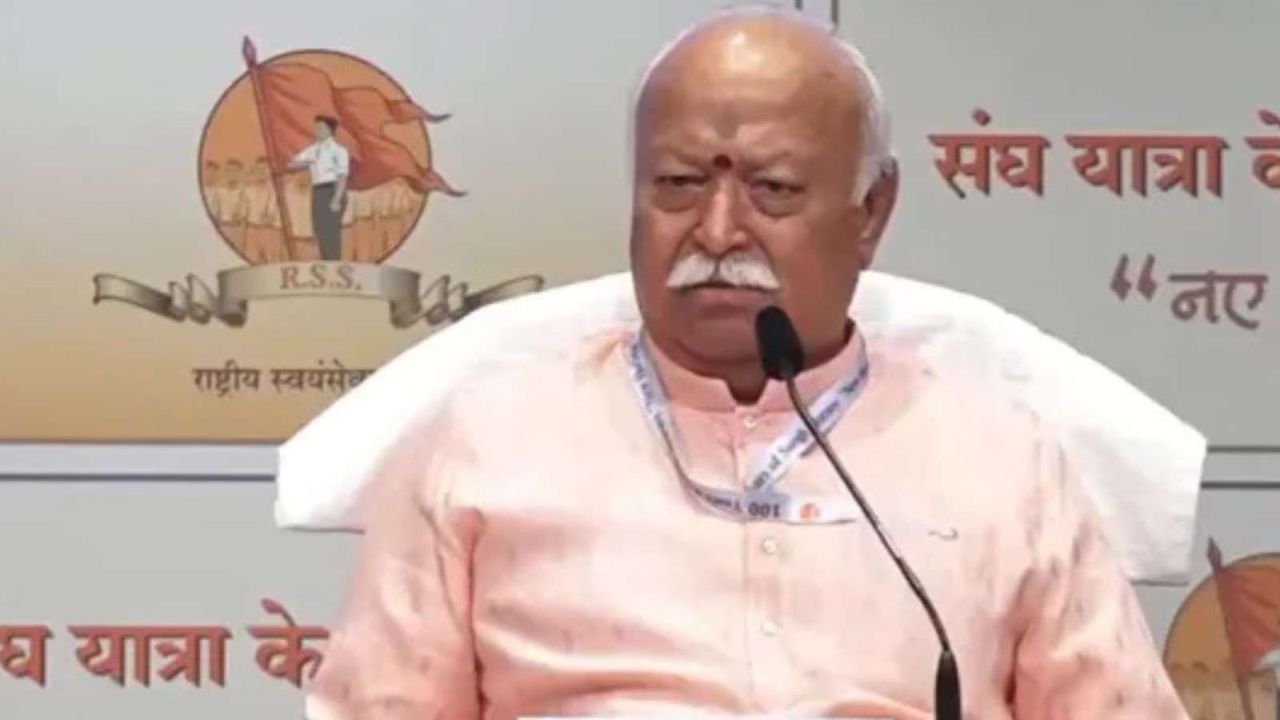

The government has invited suggestions on the proposed rules from industry stakeholders, who can submit them until November 6. Union IT Minister Ashwini Vaishnaw pointed to the rapid increase in deepfake content online and said the new rules will increase accountability for users, companies, and the government. A government official confirmed that talks had been held with AI companies, which stated that metadata can identify AI content. The responsibility now lies with companies to identify and report deepfakes. Under the new rules, companies must also include AI content guidelines in their community standards.